Of the three frameworks for evaluating individual offense, linear weights offers the simplest calculation of runs created or RAA, but will be the hardest to convert to a win-equivalent rate – mentally if not computationally. In order to do this, we need to consider what our metrics actually represent and make our choices accordingly. The path that I am going to suggest is not inevitable – it makes sense to me, but there are certainly valid alternative paths.

In attempting to measure the win value of a batter’s performance in the linear weights framework, we could construct a theoretical team and measure his win impact on it. In so doing, one could argue that the batter’s tertiary impact (which would be ignored under such an approach) is immaterial, perhaps even illusory, and that the process of converting runs to wins is independent from the development of the run estimate. Thus we could use a static approach for estimating runs and a dynamic team approach for converting those runs to wins.

I would argue in turn that the most consistent approach is to continue to operate under the assumption that linear weights represents a batter’s impact on a team that is average once he is added to it, and thus not allow any dynamism in the runs to wins conversion. Since under this school of thought all teams are equal, whether we add Frank Thomas or Matt Walbeck, there is no need to account for how those players change the run environment and the run/win conversion – because they both ultimately operate in the same run environment.

One could argue that I am taking a puritanical viewpoint, and that this would become especially clear in a case in which one compared the final result of the linear weights framework to the final result of the theoretical team framework. As we’ve seen, RAA is very similar between the two approaches, but the run/win conversions will diverge more if in one case we ignore the batter’s impact on the run environment. In any event, the methodology we’ll use for the theoretical team framework will be applicable to linear weights as well, if you desire to use it.

Since we will not be modeling any dynamic impact of the batter upon the team’s run environment, it is an easy choice to start with RAA and convert it to wins above average (WAA) by dividing by a runs per win (RPW) value. An example of this is the rule of thumb that 10 runs = 1 win, so 50 RAA would be worth 5 WAA.

There are any number of methods by which we could calculate RPW, and a couple of philosophical paths to doing so. On the latter, I’m assuming that we want our RPW to represent the best estimate of the number of marginal runs it would take for a .500 team (or more precisely a team with R = RA) to earn a marginal win. Since I’ve presumed that Pythagenpat is the correct run to win conversion, the most consistent choice is to use the RPW implied by Pythagenpat, which is:

RPW = 2*RPG^(1 – z) where z is the Pythagenpat exponent

so when z = .29, RPW = 2*RPG^.71
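As a sanity check on the formula above, here is a minimal Python sketch that compares the closed-form RPW with a numerical derivative of Pythagenpat at R = RA. The function names and the 9.0 RPG input are my own illustrative assumptions, not figures from the leagues discussed here.

```python
def pythagenpat_wpct(r_per_g, ra_per_g, z=0.29):
    """Pythagenpat W% with exponent x = RPG^z (z = .29 as in the text)."""
    rpg = r_per_g + ra_per_g
    x = rpg ** z
    return r_per_g ** x / (r_per_g ** x + ra_per_g ** x)


def rpw_formula(rpg, z=0.29):
    """Closed-form runs per win for a team with R = RA."""
    return 2 * rpg ** (1 - z)


def rpw_numeric(rpg, z=0.29, h=1e-6):
    """Runs per win from a central-difference slope of W% at R = RA."""
    r = rpg / 2
    slope = (pythagenpat_wpct(r + h, r, z) - pythagenpat_wpct(r - h, r, z)) / (2 * h)
    return 1 / slope


# Hypothetical run environment, chosen only for illustration.
example_rpg = 9.0
print(rpw_formula(example_rpg), rpw_numeric(example_rpg))
```

The two values should agree to several decimal places, which is all the closed form claims: it is the marginal run-to-win rate for a team at exactly .500.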

For the 1966 AL, this produces 8.588 RPW and for the 1994 AL it is 10.584. So we can calculate LW_WAA = LW_RAA/RPW, and LW_WAA/PA seems like the natural choice for a rate stat:

This tightens the gap between Robinson and Thomas as compared to a RAA/PA comparison, and since we’ve converted to wins, we can look at WAA/PA without having to worry about the underlying contextual differences (note: this is actually not true, but I’m going to pretend like it is for a little bit for the sake of the flow of this discussion).

There is another step we could take, which is to recognize that the Franks do influence the context in which their wins are earned, driving up their team’s RPGs and thus RPWs and thus their own WAAs. Again, I would contend that a theoretically pure linear weights framework assumes that the team is average after the player is added. Others would contend that by making that assertion I’m elevating individual tertiary offensive contributions to a completely unwarranted level of importance, ignoring a measurable effect of individual contribution because the methodology ignores an immaterial one. This is a perfectly fair critique, and so I will also show how we can adjust for the hitter’s impact on the team RPW in this step. Pete Palmer makes this adjustment as part of converting from Batting Runs (which is what I’m calling LW_RAA) to Batting Wins (what I’m calling LW_WAA), and far be it from me to argue too vociferously against Pete Palmer when it comes to a linear weights framework.

What Palmer would have you do next (conceptually, as he uses a different RPW methodology) is to take the batter’s RAA, divide by his games played, and add the result to the league RPG to get the RPG for an average team with the player in question added. It’s that simple because RPG already represents average runs scored per game by both teams and RAA already captures a batter’s primary and secondary contributions to his team’s offense. One benefit or drawback of this approach, depending on one’s perspective, is that unlike the theoretical team approach it is tethered to the player’s actual plate appearances/games. Using the theoretical team approach from this series, a batter always gets 1/9 of team PA. Under the Palmer approach, a batter’s real-world team PA, place in the batting order, frequency of being removed from the game, etc. will have a slight impact on our estimate of his impact on an average team. We could also eschew using real games played, and instead use something like “team PA game equivalents”. For example, in the 1994 AL the average team had 38.354 PA/G; Thomas, with 508 PA, had the equivalent of 119.21 games for an average hitter getting 1/9 of an average team’s PA (508/38.354*9). I’ve used real games played, as Palmer did, in the examples that follow.
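For anyone who wants to try the “team PA game equivalents” alternative, a small Python sketch of the arithmetic follows. The function name and the `lineup_slots` parameter are my own labels; the inputs are the Thomas figures quoted above.

```python
def pa_game_equivalents(pa, lg_pa_per_g, lineup_slots=9):
    """Games an average hitter would need to collect `pa` plate appearances
    while taking 1/lineup_slots of an average team's PA."""
    return pa / lg_pa_per_g * lineup_slots


# Frank Thomas, 1994 AL: 508 PA against a league average of 38.354 PA/G.
thomas_game_eq = pa_game_equivalents(508, 38.354)
print(f"{thomas_game_eq:.2f}")  # 119.21
```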

Applying the Palmerian approach to our RPW equation:

TmRPG = LgRPG + RAA/G

TmRPW = 2*TmRPG^.71

LW_WAA = LW_RAA/TmRPW
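The three equations above can be sketched in Python as follows. The function names are mine, and the 60 RAA, 140 games and 9.0 league RPG inputs are hypothetical values chosen only to show the direction of the adjustment.

```python
def league_waa(raa, lg_rpg, z=0.29):
    """WAA with no adjustment: the batter does not move the run environment."""
    return raa / (2 * lg_rpg ** (1 - z))


def palmer_style_waa(raa, games, lg_rpg, z=0.29):
    """WAA after adding the batter's RAA per game to the league RPG."""
    tm_rpg = lg_rpg + raa / games      # TmRPG = LgRPG + RAA/G
    tm_rpw = 2 * tm_rpg ** (1 - z)     # TmRPW = 2*TmRPG^.71 when z = .29
    return raa / tm_rpw                # LW_WAA = LW_RAA/TmRPW


# Hypothetical star hitter, for illustration only.
unadjusted = league_waa(60, 9.0)
adjusted = palmer_style_waa(60, 140, 9.0)
print(unadjusted, adjusted)
```

For an above-average hitter the adjusted figure is the smaller of the two, since his own production raises the team RPG and therefore the number of runs needed to buy a win.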

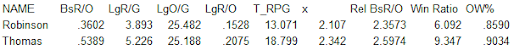

For the Franks, we get:

The difference between Thomas and Robinson didn’t change much, but both lose WAA and WAA/PA due to their effect on the team’s run environment, because each run is worth less as more are scored.

I have used WAA/PA as a win-equivalent rate without providing any justification for doing so. In fact, there is very good theoretical reason for not doing so. One of the key underpinnings of all of our rate stats is that plate appearances are not fixed across contexts – they are a function of team OBA. Wins are fixed across contexts – always exactly one per game. Thus when we compare Robinson and Thomas, it’s not enough to simply look at WAA/PA; we also need to adjust for the league PA/G difference or else the denominator of our win-equivalent rate stat will distort the relativity we have so painstakingly tried to measure.

In the 1966 AL, teams averaged 36.606 PA/G; in 1994, it was 38.354. Imagine that we had two hitters from these leagues with identical WAA and PA. We don’t have to imagine it; in 1966 Norm Siebern had .847 WAA in 399 PA, and in 1994 Felix Jose had .845 WAA in 401 PA (I’m using Palmer-style WAA in this example). It seems that Siebern had a minuscule advantage over Jose. But while wins are fixed across contexts (one per game, regardless of the time and place), plate appearances are not. A batter using 401 PA in 1994 was taking a smaller share of the average PA than one taking 399 in 1966 (you might be yelling about the difference in total games played between the two leagues due to the strike, but remember that WAA already has taken into account the performance of an average player – whether over a 113 or 162 game team-season is irrelevant when comparing their WAA figures). In 1994, 401 PA represented 10.46 team games worth of PA; in 1966, 399 represented 10.90 worth. In fact, Siebern’s WAA rate was not higher than Jose’s; despite having two fewer PA, Siebern took a larger share of his team’s PA to contribute his .85 WAA than Jose did.
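The Siebern and Jose comparison can be reproduced with a few lines of Python. This is only a sketch of the “team games of PA” arithmetic described above; the function name is my own, and the WAA, PA and PA/G inputs are the ones quoted in the paragraph.

```python
def team_games_of_pa(pa, lg_pa_per_g):
    """Number of average team games' worth of PA a batter consumed."""
    return pa / lg_pa_per_g


# Norm Siebern, 1966 AL: .847 WAA in 399 PA, league 36.606 PA/G.
siebern_games = team_games_of_pa(399, 36.606)
# Felix Jose, 1994 AL: .845 WAA in 401 PA, league 38.354 PA/G.
jose_games = team_games_of_pa(401, 38.354)

print(f"{siebern_games:.2f}")  # 10.90
print(f"{jose_games:.2f}")     # 10.46

# WAA per team game of PA: Jose comes out ahead despite the lower raw WAA.
siebern_rate = 0.847 / siebern_games
jose_rate = 0.845 / jose_games
print(siebern_rate, jose_rate)
```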

If we do not make a correction of this type and just use WAA/PA, we will be suggesting that the hitters of 1966 were more productive on a win-equivalent rate basis than the hitters of 1994 (although this is difficult to prove as by definition the average player’s WAA/PA will be 0, regardless of the environment in which they played). I don’t want to get bogged down in this discussion too much, so I will point you here for a discussion focused just on this aspect of comparing across league-seasons.

There are a number of different ways you could adjust for this; the “team games of PA” approach I used would be one. The approach I will use is to pick a reference PA/G, similar to our reference Pythagenpat exponent from the last installment, and force everyone to this scale. For all seasons 1961-2019, the average PA/G is 37.359 which I will define as refPA/G. The average R/G is 4.415, so the average RPG is 8.83 and the refRPW is 9.390.

If we calculate:

adjWAA/PA = WAA/PA * Lg(PA/G)/ref(PA/G)

Then we will have restated a hitter’s WAA rate in the reference environment. This is an option as our final linear weight win stat:

This increases Thomas’ edge over Robinson, while giving Jose a minuscule lead over Siebern. As a final rate stat, I find it a little unsatisfying for a few reasons:

1. While the ultimate objective of an offense is to contribute to wins, runs feels like a more appropriate unit.

2. Related to #1, wins compress the scale between hitters. There’s nothing wrong with this to the extent that it forces us to recognize that small differences between estimates fall squarely within the margin of error inherent to the exercise, but it makes quoting and understanding the figures more of a challenge.

3. WAA/PA, adjusted for PA context or not, is only differentially comparable; ideally we’d like to have a comparable ratio.

My solution to this is to first convert adjusted WAA/PA to an adjusted RAA/PA, which takes care of objections #1 and 2, then to convert it to an adjusted R+/PA, which takes care of objection #3. At each stage we have a perfectly valid rate stat; it’s simply a matter of preference.

To do this seems simple (let’s not get too attached to this approach, which we’ll revisit in a future post):

adjRAA/PA = adjWAA/PA*refRPW (remember, by adjusting WAA/PA using the refPA/G, we’ve restated everything in the terms of the reference league)

adjR+/PA = adjRAA/PA + ref(R/PA) (reference R/PA is .1182, which can be obtained by dividing the ref R/G by the ref PA/G)

We can also compute a relative adjusted R+/PA:

reladjR+/PA = (adjR+/PA)/(ref(R/PA))

= ((RAA/PA)/TmRPW * Lg(PA/G)/ref(PA/G) * refRPW + ref(R/PA))/ref(R/PA)

= (RAA/PA)/TmRPW * Lg(PA/G)/ref(PA/G) * refRPW/ref(R/PA) + 1
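To make the chain of conversions concrete, here is a hedged Python sketch that starts from WAA and walks through adjWAA/PA, adjRAA/PA, adjR+/PA and the relative version. The constant and function names are my own; the reference values (37.359 PA/G, 4.415 R/G) and the Siebern and Jose inputs are the ones quoted earlier in this post.

```python
REF_PA_PER_G = 37.359                        # refPA/G, 1961-2019 average
REF_R_PER_G = 4.415                          # reference R/G
REF_RPW = 2 * (2 * REF_R_PER_G) ** 0.71      # refRPW, about 9.390
REF_R_PER_PA = REF_R_PER_G / REF_PA_PER_G    # ref(R/PA), about .1182


def adjusted_rates(waa, pa, lg_pa_per_g):
    """Return (adjWAA/PA, adjRAA/PA, adjR+/PA, reladjR+/PA) for one hitter."""
    adj_waa_pa = waa / pa * lg_pa_per_g / REF_PA_PER_G
    adj_raa_pa = adj_waa_pa * REF_RPW
    adj_r_plus_pa = adj_raa_pa + REF_R_PER_PA
    rel_adj_r_plus_pa = adj_r_plus_pa / REF_R_PER_PA
    return adj_waa_pa, adj_raa_pa, adj_r_plus_pa, rel_adj_r_plus_pa


siebern = adjusted_rates(0.847, 399, 36.606)   # 1966 AL
jose = adjusted_rates(0.845, 401, 38.354)      # 1994 AL

for name, rates in (("Siebern", siebern), ("Jose", jose)):
    print(name, [round(value, 5) for value in rates])
```

Because the WAA inputs here are Palmer-style, TmRPW is already baked in; starting from RAA instead would mean dividing by TmRPW (or LgRPW) first, as in the expanded equation.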

I included raw R+/PA and its ratio to the league average (relative R+/PA) to compare to this final relative adjusted R+/PA. For three of the players, the differences are small; it is only Frank Robinson whose standing is significantly diminished. This may seem counterintuitive, but remember that the more ordinary hitters have much smaller impact on RPW than the Franks. Relative to Thomas, Robinson gets more of a boost from his lower RPW (his team RPW was 19% lower than Thomas’) than Thomas does from the PA adjustment (the 1994 AL had 4.8% more PA/G than the 1966 AL).

We could also return to the puritan approach (which I actually stubbornly favor for the linear weights framework) and make these adjustments as well. The equations are the same as above except where we use TmRPW, we will instead use LgRPW – reverting to assuming that the batter has no impact on the run/win conversion.

Here the impact on the Franks is similar; both are hurt of course when we consider their impact on TmRPW. Next time, we will quit messing around with half-measures – no more mixing linear run contributions with dynamic run/win converters. We’re going full theoretical team.